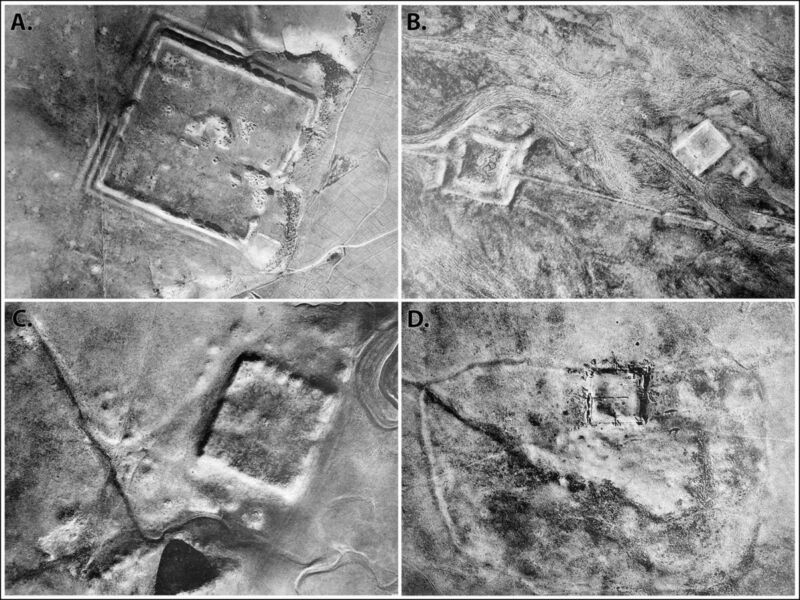

Enlarge / Spy satellite images taken by the CIA during the Cold War have revealed hundreds of Roman forts across the Fertile Crescent. (credit: J. Casana et al./US Geological Survey)

Back in the early days of aerial archaeology, a French Jesuit priest named Antoine Poidebard flew a biplane over the northern Fertile Crescent to conduct one of the first aerial surveys. He documented 116 ancient Roman forts spanning what is now western Syria to northwestern Iraq and concluded that they were constructed to secure the borders of the Roman Empire in that region.

Now, anthropologists from Dartmouth have analyzed declassified spy satellite imagery dating from the Cold War, identifying 396 Roman forts, according to a recent paper published in the journal Antiquity. And they have come to a different conclusion about the site distribution: the forts were constructed along trade routes to ensure the safe passage of people and goods.

Poidebard is a fascinating historical figure. A former World War I pilot, he later became a priest and joined the French Levant forces, helping pioneer the use of aerial photography as an archaeological surveying tool to discover and record sites of interest. (Previously, hot air balloons, scaffolds, or attaching cameras to kites were the primary means of gaining aerial context.) For his mapping missions, Poidebard clocked thousands of hours flying over Syria, as well as Algeria and Tunisia along the Mediterranean coast. He published his catalog of ancient Roman forts in his 1934 book, The Trace of Rome in the Syrian Desert, including some of the largest and best-known sites, including Sura, Resafa, and Ain Sinu.

Today's links

- Leaving Twitter had no effect of NPR's traffic: The brittle equilibrium of stage three enshittification.

- Hey look at this: Delights to delectate.

- This day in history: 2003, 2013, 2018

- Colophon: Recent publications, upcoming/recent appearances, current writing projects, current reading

Leaving Twitter had no effect of NPR's traffic (permalink)

Enshittification is the process by which a platform lures in and then captures end users (stage one), who serve as bait for business customers, who are also captured (stage two), whereupon the platform rug-pulls both groups and allocates all the value they generate and exchange to itself (stage three):

https://pluralistic.net/2023/01/21/potemkin-ai/#hey-guys

Enshittification isn't merely a form of rent-seeking – it is a uniquely digital phenomenon, because it relies on the inherent flexibility of digital systems. There are lots of intermediaries that want to extract surpluses from customers and suppliers – everyone from grocers to oil companies – but these can't be reconfigured in an eyeblink the way that purely digital services can.

A sleazy boss can hide their wage-theft with a bunch of confusing deductions to your paycheck. But when your boss is an app, it can engage in algorithmic wage discrimination, where your pay declines minutely every time you accept a job, but if you start to decline jobs, the app can raise the offer:

https://pluralistic.net/2023/04/12/algorithmic-wage-discrimination/#fishers-of-men

I call this process "twiddling": tech platforms are equipped with a million knobs on their back-ends, and platform operators can endlessly twiddle those knobs, altering the business logic from moment to moment, turning the system into an endlessly shifting quagmire where neither users nor business customers can ever be sure whether they're getting a fair deal:

https://pluralistic.net/2023/02/19/twiddler/

Social media platforms are compulsive twiddlers. They use endless variation to lure in – and then lock in – publishers, with the goal of converting these standalone businesses into commodity suppliers who are dependent on the platform, who can then be charged rent to reach the users who asked to hear from them.

Facebook designed this playbook. First, it lured in end-users by promising them a good deal: "Unlike Myspace, which spies on you from asshole to appetite, Facebook is a privacy-respecting site that will never, ever spy on you. Simply sign up, tell us everyone who matters to you, and we'll populate a feed with everything they post for public consumption":

https://lawcat.berkeley.edu/record/1128876

The users came, and locked themselves in: when people gather in social spaces, they inadvertently take one another hostage. You joined Facebook because you liked the people who were there, then others joined because they liked you. Facebook can now make life worse for all of you without losing your business. You might hate Facebook, but you like each other, and the collective action problem of deciding when and whether to go, and where you should go next, is so difficult to overcome, that you all stay in a place that's getting progressively worse.

Once its users were locked in, Facebook turned to advertisers and said, "Remember when we told these rubes we'd never spy on them? It was a lie. We spy on them with every hour that God sends, and we'll sell you access to that data in the form of dirt-cheap targeted ads."

Then Facebook went to the publishers and said, "Remember when we told these suckers that we'd only show them the things they asked to see? Total lie. Post short excerpts from your content and links back to your websites and we'll nonconsensually cram them into the eyeballs of people who never asked to see them. It's a free, high-value traffic funnel for your own site, bringing monetizable users right to your door."

Now, Facebook had to find a way to lock in those publishers. To do this, it had to twiddle. By tiny increments, Facebook deprioritized publishers' content, forcing them to make their excerpts grew progressively longer. As with gig workers, the digital flexibility of Facebook gave it lots of leeway here. Some publishers sensed the excerpts they were being asked to post were a substitute for visiting their sites – and not an enticement – and drew down their posting to Facebook.

When that happened, Facebook could twiddle in the publisher's favor, giving them broader distribution for shorter excerpts, then, once the publisher returned to the platform, Facebook drew down their traffic unless they started posting longer pieces. Twiddling lets platforms play users and business-customers like a fish on a line, giving them slack when they fight, then reeling them in when they tire.

Once Facebook converted a publisher to a commodity supplier to the platform, it reeled the publishers in. First, it deprioritized publishers' posts when they had links back to the publisher's site (under the pretext of policing "clickbait" and "malicious links"). Then, it stopped showing publishers' content to their own subscribers, extorting them to pay to "boost" their posts in order to reach people who had explicitly asked to hear from them.

For users, this meant that their feeds were increasingly populated with payola-boosted content from advertisers and pay-to-play publishers who paid Facebook's Danegeld to reach them. A user will only spend so much time on Facebook, and every post that Facebook feeds that user from someone they want to hear from is a missed opportunity to show them a post from someone who'll pay to reach them.

Here, too, twiddling lets Facebook fine-tune its approach. If a user starts to wean themself off Facebook, the algorithm (TM) can put more content the user has asked to see in the feed. When the user's participation returns to higher levels, Facebook can draw down the share of desirable content again, replacing it with monetizable content. This is done minutely, behind the scenes, automatically, and quickly. In any shell game, the quickness of the hand deceives the eye.

This is the final stage of enshittification: withdrawing surpluses from end-users and business customers, leaving behind the minimum homeopathic quantum of value for each needed to keep them locked to the platform, generating value that can be extracted and diverted to platform shareholders.

But this is a brittle equilibrium to maintain. The difference between "God, I hate this place but I just can't leave it" and "Holy shit, this sucks, I'm outta here" is razor-thin. All it takes is one privacy scandal, one livestreamed mass-shooting, one whistleblower dump, and people bolt for the exits. This kicks off a death-spiral: as users and business customers leave, the platform's shareholders demand that they squeeze the remaining population harder to make up for the loss.

One reason this gambit worked so well is that it was a long con. Platform operators and their investors have been willing to throw away billions convincing end-users and business customers to lock themselves in until it was time for the pig-butchering to begin. They financed expensive forays into additional features and complementary products meant to increase user lock-in, raising the switching costs for users who were tempted to leave.

For example, Facebook's product manager for its "photos" product wrote to Mark Zuckerberg to lay out a strategy of enticing users into uploading valuable family photos to the platform in order to "make switching costs very high for users," who would have to throw away their precious memories as the price for leaving Facebook:

https://www.eff.org/deeplinks/2021/08/facebooks-secret-war-switching-costs

The platforms' patience paid off. Their slow ratchets operated so subtly that we barely noticed the squeeze, and when we did, they relaxed the pressure until we were lulled back into complacency. Long cons require a lot of prefrontal cortex, the executive function to exercise patience and restraint.

Which brings me to Elon Musk, a man who seems to have been born without a prefrontal cortex, who has repeatedly and publicly demonstrated that he lacks any restraint, patience or planning. Elon Musk's prefrontal cortical deficit resulted in his being forced to buy Twitter, and his every action since has betrayed an even graver inability to stop tripping over his own dick.

Where Zuckerberg played enshittification as a long game, Musk is bent on speedrunning it. He doesn't slice his users up with a subtle scalpel, he hacks away at them with a hatchet.

Musk inaugurated his reign by nonconsensually flipping every user to an algorithmic feed which was crammed with ads and posts from "verified" users whose blue ticks verified solely that they had $8 ($11 for iOS users). Where Facebook deployed substantial effort to enticing users who tired of eyeball-cramming feed decay by temporarily improving their feeds, Musk's Twitter actually overrode users' choice to switch back to a chronological feed by repeatedly flipping them back to more monetizable, algorithmic feeds.

Then came the squeeze on publishers. Musk's Twitter rolled out a bewildering array of "verification" ticks, each priced higher than the last, and publishers who refused to pay found their subscribers taken hostage, with Twitter downranking or shadowbanning their content unless they paid.

(Musk also squeezed advertisers, keeping the same high prices but reducing the quality of the offer by killing programs that kept advertisers' content from being published along Holocaust denial and open calls for genocide.)

Today, Musk continues to squeeze advertisers, publishers and users, and his hamfisted enticements to make up for these depredations are spectacularly bad, and even illegal, like offering advertisers a new kind of ad that isn't associated with any Twitter account, can't be blocked, and is not labeled as an ad:

https://www.wired.com/story/xs-sneaky-new-ads-might-be-illegal/

Of course, Musk has a compulsive bullshitter's contempt for the press, so he has far fewer enticements for them to stay. Quite the reverse: first, Musk removed headlines from link previews, rendering posts by publishers that went to their own sites into stock-art enigmas that generated no traffic:

Then he jumped straight to the end-stage of enshittification by announcing that he would shadowban any newsmedia posts with links to sites other than Twitter, "because there is less time spent if people click away." Publishers were advised to "post content in long form on this platform":

https://mamot.fr/@pluralistic/111183068362793821

Where a canny enshittifier would have gestured at a gaslighting explanation ("we're shadowbanning posts with links because they might be malicious"), Musk busts out the motto of the Darth Vader MBA: "I am altering the deal, pray I don't alter it any further."

All this has the effect of highlighting just how little residual value there is on the platform for publishers, and tempts them to bolt for the exits. Six months ago, NPR lost all patience with Musk's shenanigans, and quit the service. Half a year later, they've revealed how low the switching cost for a major news outlet that leaves Twitter really are: NPR's traffic, post-Twitter, has declined by less than a single percentage point:

https://niemanreports.org/articles/npr-twitter-musk/

NPR's Twitter accounts had 8.7 million followers, but even six months ago, Musk's enshittification speedrun had drawn down NPR's ability to reach those users to a negligible level. The 8.7 million number was an illusion, a shell game Musk played on publishers like NPR in a bid to get them to buy a five-figure iridium checkmark or even a six-figure titanium one.

On Twitter, the true number of followers you have is effectively zero – not because Twitter users haven't explicitly instructed the service to show them your posts, but because every post in their feeds that they want to see is a post that no one can be charged to show them.

I've experienced this myself. Three and a half years ago, I left Boing Boing and started pluralistic.net, my cross-platform, open access, surveillance-free, daily newsletter and blog:

https://pluralistic.net/2023/02/19/drei-drei-drei/#now-we-are-three

Boing Boing had the good fortune to have attracted a sizable audience before the advent of siloed platforms, and a large portion of that audience came to the site directly, rather than following us on social media. I knew that, starting a new platform from scratch, I wouldn't have that luxury. My audience would come from social media, and it would be up to me to convert readers into people who followed me on platforms I controlled – where neither they nor I could be held to ransom.

I embraced a strategy called POSSE: Post Own Site, Syndicate Everywhere. With POSSE, the permalink and native habitat for your material is a site you control (in my case, a WordPress blog with all the telemetry, logging and surveillance disabled). Then you repost that content to other platforms – mostly social media – with links back to your own site:

There are a lot of automated tools to help you with this, but the platforms have gone to great lengths to break or neuter them. Musk's attack on Twitter's legendarily flexible and powerful API killed every automation tool that might help with this. I was lucky enough to have a reader – Loren Kohnfelder – who coded me some python scripts that automate much of the process, but POSSE remains a very labor-intensive and error-prone methodology:

https://pluralistic.net/2021/01/13/two-decades/#hfbd

And of all the feeds I produce – email, RSS, Discourse, Medium, Tumblr, Mastodon – none is as labor-intensive as Twitter's. It is an unforgiving medium to begin with, and Musk's drawdown of engineering support has made it wildly unreliable. Many's the time I've set up 20+ posts in a thread, only to have the browser tab reload itself and wipe out all my work.

But I stuck with Twitter, because I have a half-million followers, and to the extent that I reach them there, I can hope that they will follow the permalinks to Pluralistic proper and switch over to RSS, or email, or a daily visit to the blog.

But with each day, the case for using Twitter grows weaker. I get ten times as many replies and reposts on Mastodon, though my Mastodon follower count is a tenth the size of my (increasingly hypothetical) Twitter audience.

All this raises the question of what can or should be done about Twitter. One possible regulatory response would be to impose an "End-To-End" rule on the service, requiring that Twitter deliver posts from willing senders to willing receivers without interfering in them. End-To-end is the bedrock of the internet (one of its incarnations is Net Neutrality) and it's a proven counterenshittificatory force:

https://www.eff.org/deeplinks/2023/06/save-news-we-need-end-end-web

Despite what you may have heard, "freedom of reach" is freedom of speech: when a platform interposes itself between willing speakers and their willing audiences, it arrogates to itself the power to control what we're allowed to say and who is allowed to hear us:

https://pluralistic.net/2022/12/10/e2e/#the-censors-pen

We have a wide variety of tools to make a rule like this stick. For one thing, Musk's Twitter has violated innumerable laws and consent decrees in the US, Canada and the EU, which creates a space for regulators to impose "conduct remedies" on the company.

But there's also existing regulatory authorities, like the FTC's Section Five powers, which enable the agency to act against companies that engage in "unfair and deceptive" acts. When Twitter asks you who you want to hear from, then refuses to deliver their posts to you unless they pay a bribe, that's both "unfair and deceptive":

https://pluralistic.net/2023/01/10/the-courage-to-govern/#whos-in-charge

But that's only a stopgap. The problem with Twitter isn't that this important service is run by the wrong mercurial, mediocre billionaire: it's that hundreds of millions of people are at the mercy of any foolish corporate leader. While there's a short-term case for improving the platforms, our long-term strategy should be evacuating them:

https://pluralistic.net/2023/07/18/urban-wildlife-interface/#combustible-walled-gardens

To make that a reality, we could also impose a "Right To Exit" on the platforms. This would be an interoperability rule that would require Twitter to adopt Mastodon's approach to server-hopping: click a link to export the list of everyone who follows you on one server, click another link to upload that file to another server, and all your followers and followees are relocated to your new digs:

https://pluralistic.net/2022/12/23/semipermeable-membranes/#free-as-in-puppies

A Twitter with the Right To Exit would exert a powerful discipline even on the stunted self-regulatory centers of Elon Musk's brain. If he banned a reporter for publishing truthful coverage that cast him in a bad light, that reporter would have the legal right to move to another platform, and continue to reach the people who follow them on Twitter. Publishers aghast at having the headlines removed from their Twitter posts could go somewhere less slipshod and still reach the people who want to hear from them on Twitter.

And both Right To Exit and End-To-End satisfy the two prime tests for sound internet regulation: first, they are easy to administer. If you want to know whether Musk is permitting harassment on his platform, you have to agree on a definition of harassment, determine whether a given act meets that definition, and then investigate whether Twitter took reasonable steps to prevent it.

By contrast, administering End-To-End merely requires that you post something and see if your followers receive it. Administering Right To Exit is as simple as saying, "OK, Twitter, I know you say you gave Cory his follower and followee file, but he says he never got it. Just send him another copy, and this time, CC the regulator so we can verify that it arrived."

Beyond administration, there's the cost of compliance. Requiring Twitter to police its users' conduct also requires it to hire an army of moderators – something that Elon Musk might be able to afford, but community-supported, small federated servers couldn't. A tech regulation can easily become a barrier to entry, blocking better competitors who might replace the company whose conduct spurred the regulation in the first place.

End-to-End does not present this kind of barrier. The default state for a social media platform is to deliver posts from accounts to their followers. Interfering with End-To-End costs more than delivering the messages users want to have. Likewise, a Right To Exit is a solved problem, built into the open Mastodon protocol, itself built atop the open ActivityPub standard.

It's not just Twitter. Every platform is consuming itself in an orgy of enshittification. This is the Great Enshittening, a moment of universal, end-stage platform decay. As the platforms burn, calls to address the fires grow louder and harder for policymakers to resist. But not all solutions to platform decay are created equal. Some solutions will perversely enshrine the dominance of platforms, help make them both too big to fail and too big to jail.

Musk has flagrantly violated so many rules, laws and consent decrees that he has accidentally turned Twitter into the perfect starting point for a program of platform reform and platform evacuation.

(Image: JD Lasica, CC BY 2.0, modified)

Hey look at this (permalink)

- The Whole of the Whole Earth Catalog Is Now Online https://www.wired.com/story/whole-earth-catalog-now-online-internet-archive/

-

Mysteries For Rats by Penelope Scott https://penelopescott.bandcamp.com/album/mysteries-for-rats

-

Pickup is a sensor power tool https://www.kickstarter.com/projects/supermechanical/pickup-is-a-sensor-power-tool

This day in history (permalink)

#20yrsago My collection reviewed in NYTimes https://www.nytimes.com/2003/10/12/books/science-fiction.html

#20yrsago Homeland Security deports fiancee of Homeland Security staffer https://web.archive.org/web/20031025154021/https://landofthefree.blogspot.com/2003_10_12_landofthefree_archive.html

#10yrsago Phoenix TSA makes breast cancer survivors remove their prostheses https://www.usatoday.com/story/news/nation/2013/10/11/phoenix-airport-screening-draws-angry-complaints/2970589/

#10yrsago W3C’s DRM for HTML5 sets the stage for jailing programmers, gets nothing in return http://radar.oreilly.com/2013/10/what-do-we-get-for-that-drm.html

#5yrsago The US Patent and Trademark Office is ready to hand over an exclusive trademark for “Dragon Slayer” for fantasy novels https://memex.craphound.com/2018/10/12/updated-the-us-patent-and-trademark-office-is-ready-to-hand-over-an-exclusive-trademark-for-dragon-slayer-for-fantasy-novels/

#5yrsago A year later, giant Chinese security camera company’s products are still a security dumpster-fire https://www.zdnet.com/article/over-nine-million-cameras-and-dvrs-open-to-apts-botnet-herders-and-voyeurs/

#5yrsago Blame Big Data for CVS’s endless miles of receipts https://www.vox.com/the-goods/2018/10/10/17956950/why-are-cvs-pharmacy-receipts-so-long

Colophon (permalink)

Today's top sources: Kottke (https://kottke.org).

Currently writing:

- A Little Brother short story about DIY insulin PLANNING

-

Picks and Shovels, a Martin Hench noir thriller about the heroic era of the PC. FORTHCOMING TOR BOOKS JAN 2025

-

The Bezzle, a Martin Hench noir thriller novel about the prison-tech industry. FORTHCOMING TOR BOOKS FEB 2024

-

Vigilant, Little Brother short story about remote invigilation. FORTHCOMING ON TOR.COM

-

Moral Hazard, a short story for MIT Tech Review's 12 Tomorrows. FIRST DRAFT COMPLETE, ACCEPTED FOR PUBLICATION

-

Spill, a Little Brother short story about pipeline protests. FORTHCOMING ON TOR.COM

Latest podcast: The Lost Cause (excerpt) https://craphound.com/news/2023/10/12/the-lost-cause-excerpt/

Upcoming appearances:

- The Internet Con at Moon Palace Books (Minneapolis), Oct 15

https://moonpalacebooks.com/events/30127 -

26th ACM Conference On Computer-Supported Cooperative Work and Social Computing keynote (Minneapolis), Oct 16

https://cscw.acm.org/2023/index.php/keynotes/ -

41st annual McCreight Lecture in the Humanities (Charleston, WV), Oct 19

https://festivallcharleston.com/venue/university-of-charleston/ -

Taylor Books (Charleston, WV), Oct 20, 12h-14h

http://www.taylorbooks.com/ -

Seizing the Means of Computation (Edinburgh Futures Institute), Oct 25

https://efi.ed.ac.uk/event/seizing-the-means-of-computation-with-cory-doctorow/ -

The Internet Con at the Internet Archive (virtual), Oct 31

https://www.eventbrite.com/e/book-talk-the-internet-con-tickets-730939137637

Recent appearances:

- Grim Grinning Ghosts (That Hallowe'en Podcast)

https://thathalloweenpodcast.libsyn.com/grim-grinning-ghosts-with-author-cory-doctorow -

1000 w/Ron Placone

https://podcasters.spotify.com/pod/show/1000wronplacone/episodes/Cory-Doctorow—004-e2a420a -

An Audacious Plan to Halt the Internet's Enshittification (Burning Man Center Camp)

https://www.youtube.com/watch?v=mu2fzvKxKvU

Latest books:

- "The Internet Con": A nonfiction book about interoperability and Big Tech (Verso) September 2023 (http://seizethemeansofcomputation.org). Signed copies at Book Soup (https://www.booksoup.com/book/9781804291245).

-

"Red Team Blues": "A grabby, compulsive thriller that will leave you knowing more about how the world works than you did before." Tor Books http://redteamblues.com. Signed copies at Dark Delicacies (US): and Forbidden Planet (UK): https://forbiddenplanet.com/385004-red-team-blues-signed-edition-hardcover/.

-

"Chokepoint Capitalism: How to Beat Big Tech, Tame Big Content, and Get Artists Paid, with Rebecca Giblin", on how to unrig the markets for creative labor, Beacon Press/Scribe 2022 https://chokepointcapitalism.com

-

"Attack Surface": The third Little Brother novel, a standalone technothriller for adults. The Washington Post called it "a political cyberthriller, vigorous, bold and savvy about the limits of revolution and resistance." Order signed, personalized copies from Dark Delicacies https://www.darkdel.com/store/p1840/Available_Now%3A_Attack_Surface.html

-

"How to Destroy Surveillance Capitalism": an anti-monopoly pamphlet analyzing the true harms of surveillance capitalism and proposing a solution. https://onezero.medium.com/how-to-destroy-surveillance-capitalism-8135e6744d59 (print edition: https://bookshop.org/books/how-to-destroy-surveillance-capitalism/9781736205907) (signed copies: https://www.darkdel.com/store/p2024/Available_Now%3A__How_to_Destroy_Surveillance_Capitalism.html)

-

"Little Brother/Homeland": A reissue omnibus edition with a new introduction by Edward Snowden: https://us.macmillan.com/books/9781250774583; personalized/signed copies here: https://www.darkdel.com/store/p1750/July%3A__Little_Brother_%26_Homeland.html

-

"Poesy the Monster Slayer" a picture book about monsters, bedtime, gender, and kicking ass. Order here: https://us.macmillan.com/books/9781626723627. Get a personalized, signed copy here: https://www.darkdel.com/store/p2682/Corey_Doctorow%3A_Poesy_the_Monster_Slayer_HB.html#/.

Upcoming books:

- The Lost Cause: a post-Green New Deal eco-topian novel about truth and reconciliation with white nationalist militias, Tor Books, November 2023

-

The Bezzle: a sequel to "Red Team Blues," about prison-tech and other grifts, Tor Books, February 2024

-

Picks and Shovels: a sequel to "Red Team Blues," about the heroic era of the PC, Tor Books, February 2025

-

Unauthorized Bread: a graphic novel adapted from my novella about refugees, toasters and DRM, FirstSecond, 2025

This work – excluding any serialized fiction – is licensed under a Creative Commons Attribution 4.0 license. That means you can use it any way you like, including commercially, provided that you attribute it to me, Cory Doctorow, and include a link to pluralistic.net.

https://creativecommons.org/licenses/by/4.0/

Quotations and images are not included in this license; they are included either under a limitation or exception to copyright, or on the basis of a separate license. Please exercise caution.

How to get Pluralistic:

Blog (no ads, tracking, or data-collection):

Newsletter (no ads, tracking, or data-collection):

https://pluralistic.net/plura-list

Mastodon (no ads, tracking, or data-collection):

Medium (no ads, paywalled):

(Latest Medium column: "What to do with the Democrats: They want to do it? Let's make them do it https://doctorow.medium.com/what-to-do-with-the-democrats-011c1dd677d6)

Twitter (mass-scale, unrestricted, third-party surveillance and advertising):

Tumblr (mass-scale, unrestricted, third-party surveillance and advertising):

https://mostlysignssomeportents.tumblr.com/tagged/pluralistic

"When life gives you SARS, you make sarsaparilla" -Joey "Accordion Guy" DeVilla

Per the current trend, people are taking an idea and getting SUPER offended by it. Instead of stopping, doing some thinking, maybe *gasp, some introspection, they just fire off all these lies about the idea to get others also against it, when in reality, the idea would actually help a lot of people.

The idea of “toxic masculinity” seems to really peeve a lot of people off and start clamoring that it means being “manly” is bad and all men should start acting feminine. This is not at all what that phrase means. That would be akin to saying “apple seeds contain cyanide” means “you shouldn’t eat apples”. “Toxic masculinity” is just taking masculinity too far and pushing it onto others, causing a toxic atmosphere that leads to more problems in society as well as personal lives.

So, masculinity is OKAY. If you’re into that. Lifting weights, going hunting, playing sports, wearing camo, shooting guns. wanting to be protective of women and children, whatever else is considered masculine – these are all perfectly acceptable and even desirable by a certain population. If you’re into it, and it’s not hurting others, GREAT! NO one is telling men they shouldn’t be who they want to be. If being a stereotypical manly man is your thing, I love it. People in general love it. You be you!

What’s NOT okay is the idea that NOT being perfectly masculine is a bad thing and something to be shamed. What’s toxic is shaming men or boys who, for instance, take dance, like pink, cook, do laundry, help take care of their own kids (without calling it babysitting), or worst of all, show emotion. This leads to de-humanizing of people and the trapped feeling men can get from not being able to be themselves for fear of being shamed, bullied, or even physically beaten.

One of the things I see on a daily basis is men in the Euthanasia room, trying their hardest to hold back emotion and tears when they have to say goodbye to their best friend of 15 years. Why should men feel the need to hide that? Why shouldn’t they be allowed to cry without feeling shame? Having an old man, from the “Greatest generation” apologize and look ashamed of himself because he lets his face break for even a moment while trying to remain calm and collected and say goodbye to his best old buddy is heart wrenching.

Or my dad, who was always telling us that we didn’t need a therapist and/or anti-depressants/anxiety meds – we just needed to get out and exercise or eat right or find a hobby. Then, just a few months ago, he called me up to tell me about how he finally went and saw a doctor about anxiety and got on some medication and – can you imagine? ! – it helped SO much! He couldn’t believe the difference it could make!

Could you imagine a world where men were allowed to show emotion? Be themselves? Not have to fit into a tiny mold of what men are SUPPOSED to be? Could you imagine how much less violence there might be in the world? How much less pent up frustration? Can you see the irony in women being more brave to break out of their traditional roles than men are? This is why we give men who show something outside the traditional range of manliness so much attention – it’s called positive reinforcement. It’s not that being “manly” is bad, it’s just that being yourself should be celebrated and people who are being themselves are being SO brave!

So, you want to be a manly man? Have golden calf testicles hanging off your ridiculously large truck that you drive like someone released a bee hive in your cab, and then, inevitably complain about gas prices? Great! Good for you! No one is telling you that’s bad (except, maybe, the environmentalists). But you should also not feel like you have to hold back your tears as you pay the $150 to drive that truck to town and back. Nor should you ruin your son’s life by making him feel like “men don’t cry” and turn him into a domestic violence psychopath. Emotions are okay. Men doing the dishes and being a caretaker is OKAY.

Be a man! Be brave! Be you! Just don’t be a dick. That’s all.

The post “Toxic Masculinity” ≠ “Masculinity is toxic” first appeared on This Little Light.

When using Windows 11 you probably have noticed the widget or weather icon on the taskbar. in the lower left corner. The widget popups when you hover over the icon with your mouse, showing you the latest news, stocking info, and weather. Most people find ... Read moreHow to Disable Widgets in Windows 11 Completely

The post How to Disable Widgets in Windows 11 Completely appeared first on LazyAdmin.

Crypto researcher Tayvano posted a Twitter thread about a massive, mysterious wallet draining operation that has siphoned more than 5,000 ETH (~$9.88 million at today's prices) as well as other tokens and NFTs from wallets across more than eleven blockchains since December 2022. The operation appears to target more sophisticated crypto users, but the mechanism of attack is unclear. The researcher hypothesized that "someone has got themselves a fatty cache of data from 1+ yr ago & is methodically draining the keys as they parse them from the treasure trove", but emphasized that that was only speculation.